Every organization operates between two truths: the intent documented in its manuals, and the reality revealed by actual behavior. What people often call "AI resistance" is in fact a systemic immune response: a mechanism the organization triggers to protect the ambiguity it needs to survive. Understanding the gap between the "surface structures" and actual decision-making is the first step toward "Structural Honesty."

You did everything right: leadership aligned and announced the plan, budgets were approved, consultants drew the flowcharts, vendors demonstrated the platform, training was completed, and pilot projects launched. Yet the project slowed down and eventually stalled. Meetings proliferated, responsibilities blurred, and people gradually realized that a project that looked seamless on slides was far more complex in practice. This is the reality of many AI pilot projects, and it often has nothing to do with the technology itself.

The conventional logic holds that because people resist what they do not understand, we need to improve communication, reinforce training, and build support across the organization. On the surface this seems reasonable, but the premise itself may be wrong. The real obstacles are implicit; they exist at a level that no slide can show.

How AI exposes hidden organizational decision structures

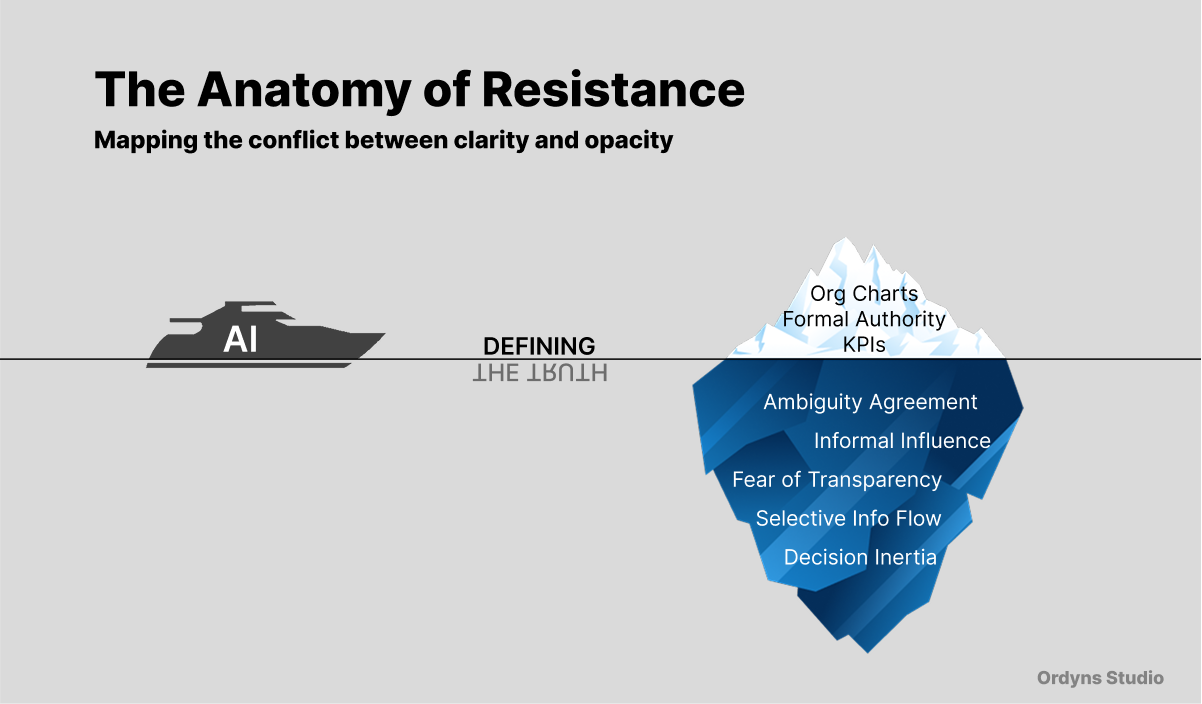

In reality, an organization operates on two layers. The first layer is visible: org charts, reporting lines, and explicit responsibilities. It is the organization as it ideally imagines itself: clear, logical, and well documented. The second layer is invisible, and it is where decisions actually happen. Here, human judgment carries more weight than job descriptions. Here, projects stall because no one wants to bear the consequences. Here, information is shared selectively, not because a process failed, but because sharing it would upset an unspoken balance.

This invisible layer does not indicate dysfunction; it is how organizations have long survived complexity. In many cases, ambiguity is not a bug but a mechanism people have quietly built to protect themselves. For decades, most technologies, such as databases, workflow tools, and operating systems, interacted primarily with the visible layer: they shaped processes rather than politics. AI is fundamentally different, because it reaches into the invisible layer.

Why AI transparency triggers organizational resistance

This scenario is all too familiar. An executive receives an AI-generated report showing that a key product line has underperformed for two consecutive quarters. The data is clear, the patterns are evident, and the recommendations are practical. After reading the report, he closes the tab. He does not distrust the data; on the contrary, he trusts it. The problem is that acting on it would mean reopening decisions made eighteen months earlier, decisions that involved three departments, two external partners, and a budget discussion that never truly ended. Everyone involved at the time knew exactly what was happening, yet no one said so openly. The ambiguity was itself a tacit agreement.

Yet AI knows nothing of this. It sees the numbers but not what lies behind them. In that sense, closing the tab may be a rational response.

Why AI hits the organizational "immune system"

This is not about any specific role. The focus should not be on the CEO, the strategy team, or the project manager, but on a more fundamental pattern: wherever there is room for human judgment, people will use it. They do so not out of malice but out of human nature. Every decision that involves others, every weighing of pros and cons, and every moment where influence can be quietly exerted falls into this category. These are the norms of organizational life, not the exceptions; this is simply how organizations work.

Gray zones are not vulnerabilities in the system; they are part of the system itself, and, at least for now, they are universal. When AI systems begin to illuminate these gray zones, a shift occurs, not necessarily toward something better or worse, but toward something different. People who once worked comfortably in productive ambiguity now find themselves in full view. This shift is not optional, nor is it a side effect that better change management could avoid. It is what inevitably happens when a system built on human opacity begins to interact with a technology grounded in clarity.

Why Structural Honesty is the prerequisite for AI implementation

Most organizations skip one question. It is not which AI tools to deploy or how to manage the transition, but an earlier and more honest one: how much do we actually know about how decisions are made inside our own organization?

This means decision-making not as it is presented in documents, nor as leadership believes it works, but as it actually operates through human behavior: where power truly resides, where responsibility truly ends, and why certain gray zones are quietly preserved for reasons no one officially admits. This is what I call "Structural Honesty": before AI arrives, the actual logic of how an organization functions matters more than the blueprint of how it is supposed to function. AI has to be grounded in that actual logic; otherwise it cannot work properly.

Why organizations resist AI-driven transparency

Technology is developing far faster than organizations are building the self-awareness they need to absorb it. Every week an organization goes without examining its own inner workings is another week its AI deployment drifts. This gap cannot persist indefinitely. Organizations that can see themselves clearly are likely to hold a distinct competitive advantage once AI is embedded, not because their technology performs better, but because their people have already learned to operate with transparency.

The uncomfortable truth is that AI follows a clear logic, while organizations are built on "human black boxes." Replacing the people who rely on that opacity is not a universal solution, and probably not a lasting one either, because ambiguity itself is a survival mechanism, a feature quietly designed to protect the system. The key question is what happens when AI begins to automatically audit the hidden affiliations behind every procurement contract, challenging decisions based on "unspoken rules" rather than efficiency; when it flags resource allocations driven by managerial intuition or turf wars; when it cuts through departmental silos to expose risks that were once selectively hidden. At that point, the issue is no longer just technical change. In the past, leaders could choose to ignore the effects of internal "human behavior"; in the future, that will be impossible unless the organization is willing to bear the cost of complacency. This organizational deception is not merely a social issue; it functions like a structural causal game in which ambiguity protects the status quo.

Who defines the truth in a data-driven organization

Ultimately, this is a fundamental conflict over who has the right to define the truth. If an organization is unwilling to deconstruct the real logic behind its everyday behaviors, AI will keep running into invisible icebergs. This is not because the technology is blind, but because the organization has never truly understood the behavioral mechanisms beneath its surface. That is where resistance to AI transformation really lies. In this conflict, AI is not to blame; the friction originates entirely in human behavior.

True readiness for AI lies not at the technical level, nor even at the conventional cultural level, but at the level of "Structural Honesty." Most organizations have yet to begin this conversation, and that conversation is exactly what is urgently needed. In the next part, I will show how to map an organization's actual survival logic and define the core framework of Structural Honesty.